If you’re here, you probably know that time series data is a bit different from static ML data. So when working on time series projects, data scientists and ML engineers often use specialized tools and libraries, or general-purpose tools that have proved well suited to time series work.

We figured it would be useful to gather those tools in one place, so here we are. This article is a catalog of time series tools and packages. Some of them are well known and some may be new to you. We hope you’ll find the whole list useful!

Before we dig into tools, let’s cover some basics.

What is a time series?

A time series is a sequence of data points indexed in time order. It’s an observation of the same variable at successive points in time. In other words, it’s a set of data that has been observed over a period of time.

The data is often plotted as a line on a graph with time on the x-axis and the value at each point on the y-axis. Also, there are four main components of a time series:

1. Trend

2. Seasonal variations

3. Cyclic variations

4. Irregular or random variations

A Trend is simply a general direction of change in the data over many periods and it’s the long-term pattern in the data. The trend usually appears for a certain amount of time, after which it disappears or changes direction. For example, in financial markets, a ‘Bullish Trend’ indicates an upward trend where the prices of financial assets rise in general, while a ‘Bearish Trend’ indicates a decline in the prices.

Broadly, a trend in time series can be:

- Upward trend: a time series increases over an observed period.

- Downward trend: a time series decreases over an observed period.

- Constant or horizontal trend: a time series doesn’t significantly rise or fall over an observed period.

Seasonal variation, or seasonality, is an important component to consider when looking at a time series because it can provide information about what might happen in the future based on past data. It refers to variation in the value of a measure that repeats over a fixed period, such as between winter and summer months, but it can also occur on a daily, weekly, or monthly basis. For example, temperature is seasonal because it is higher in summer and lower in winter.

In contrast to seasonal variations, cyclic variations don’t have fixed periods and may drift over time. For instance, financial markets tend to cycle between periods of high and low values, but there is no predetermined period of time between them. A time series can also have both seasonal and cyclic variations. The real estate market, for example, is known to have both. The seasonal pattern shows that there are more transactions in the spring than in the summer. The cyclic pattern reflects people’s purchasing power: in a crisis there are fewer sales than in times of prosperity.

Irregular or random variations are what remains after the trend, seasonal, and cyclic components are removed, which is why they are also known as the residual component. This is the non-systematic part of a time series: it is completely random and can’t be predicted.
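
To make the components concrete, here is a minimal sketch that builds a synthetic series from a trend, a seasonal pattern, and random noise, and then recovers an estimate of the trend with a moving average. The additive form, the slope, the 12-step period, and the noise level are all illustrative assumptions, and the cyclic component is left out for simplicity.

```python
import numpy as np

rng = np.random.default_rng(0)
t = np.arange(120)                           # e.g. 120 monthly observations

trend = 0.5 * t                              # upward trend (assumed slope)
seasonal = 10 * np.sin(2 * np.pi * t / 12)   # pattern that repeats every 12 steps
irregular = rng.normal(0, 1, size=t.size)    # random, unpredictable part

# Additive model: the observed series is the sum of its components.
series = trend + seasonal + irregular

# A 12-point moving average covers exactly one seasonal period, so the
# seasonal component averages out and the result approximates the trend.
window = 12
smoothed = np.convolve(series, np.ones(window) / window, mode="valid")

print(series[:5])
print(smoothed[:5])
```

Because the window matches the seasonal period, `smoothed` follows the straight trend line closely, with only the averaged noise left over.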

In general, time series are often used in many fields such as economics, mathematics, biology, physics, meteorology, etc. Concretely, some examples of time series data are:

- The Dow Jones Industrial Average index prices

- The temperature in New York City

- Bitcoin price

- ECG signals

- Google trends of the term MLOps

- The unemployment rate in the USA

- Website traffic over time

In this article, we will take a look at a few of the aforementioned examples.

Examples of time series projects

Stock market prediction

Stock market forecasting is a challenging and attractive topic where the main goal is to develop methods and strategies for predicting future stock prices. There are many different techniques, from classic algorithmic and statistical methods up to complex neural network architectures. What they have in common is that they all rely on time series to produce forecasts. Stock market forecasting methods are widely used by amateur investors, fintech startups, and big hedge funds.

There are many ways to use stock market forecasting methods in practice, but the most popular is probably trading. Automated trading on stock exchanges is on the rise, and it’s estimated that about 75% of trades on US stock exchanges come from algorithmic systems. There are two main approaches to predicting how stocks will perform in the future: fundamental analysis and technical analysis.

Fundamental analysis looks at factors such as a company’s financial statements, management, and industry trends. Also, it takes into account some macroeconomic indicators such as inflation rate, GDP, state of the economy, and similar. All these indicators are time-dependent and in that way can be represented as time series.

In contrast to fundamental analysis, technical analysis uses patterns in trading volume, price changes, and other information from the market itself to predict how stocks will perform in the future. It’s important for investors to understand both approaches before making an investment decision.

Bitcoin price forecasting

Bitcoin is a digital currency that has significant fluctuations in price. It’s also one of the most volatile assets in the world. The price of bitcoin is determined by supply and demand. When demand for bitcoins increases, the price increases, and when demand falls, the price falls. As demand has increased in recent years, so has the price. Because of its very volatile nature, it is a very challenging task to forecast bitcoin’s future prices.

In general, this problem is very similar to stock market prediction, and almost the same methods can be used to solve it. Bitcoin has even been shown to correlate with indices such as the S&P 500 and the Dow Jones, which means that, to some degree, the bitcoin price follows the prices of those indices.

ECG anomaly detection

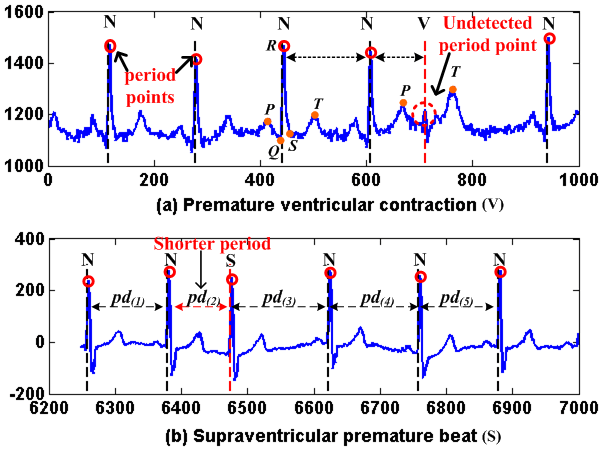

ECG anomaly detection is a technique for detecting abnormalities in an ECG. An ECG is a test that monitors the electrical activity of the heart. In essence, it is an electrical signal generated by the heart and represented as a time series.

ECG anomaly detection works by comparing a recorded signal against the normal ECG pattern. There are many types of anomalies in an ECG, and they can be classified as follows:

- Heart rate anomalies: this refers to any change in heart rate from its normal range. This may be due to a problem with the heart or a problem with how it is being stimulated.

- Heart rhythm anomalies: this refers to any change in rhythm from its normal pattern. This may be due to a problem with the way that impulses are being conducted through the heart or problems with how quickly they are conducted through it.

A lot of work has been done on this topic, ranging from academic research to commercial ECG machines, and there are some promising results. The biggest challenge is that the system needs a very high level of accuracy, with as few false positives and false negatives as possible. This follows from the nature of the problem and the consequences of a wrong prediction.

Tools, packages, and libraries for time series projects

Now that we have some background on the importance of time series in the industry, let’s take a look at some popular tools, packages, and libraries that can be helpful for any time series project. Because the majority of data science and machine learning projects related to time series are done in Python, it makes sense to discuss tools supported by Python.

We will discuss tools from four main categories:

1. Data preparation and feature engineering tools

2. Data analysis and visualization packages

3. Experiment tracking tools

4. Time series forecasting packages

Data preparation and feature engineering tools for time series

Data preparation and feature engineering are two very important steps in the data science pipeline. Data preparation is typically the first step in any data science project. It’s the process of getting data into a form that can be used for analysis and further processing.

Feature engineering is a process of extracting features from raw data to make it more useful for modelling and prediction. Below, we’ll mention some of the most popular tools used for these tasks.

Time series projects with Pandas

Pandas is a Python library for data manipulation and analysis. It includes data structures and methods for manipulating numerical tables and time series. Also, it contains extensive capabilities and features for working with time series data for all domains.

It supports data input from a variety of sources, including CSV, JSON, Parquet, SQL database tables and queries, and Microsoft Excel. Pandas also offers data manipulation features such as merging, reshaping, and selecting, as well as data cleaning and wrangling.

Some useful time series features are:

- Date range generation and frequency conversions

- Moving window statistics

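- Moving window linear regressions (part of older pandas releases; in current versions this is available through Statsmodels’ RollingOLS class)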

- Date shifting

- Lagging and many more
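
As a rough illustration of several of these features, the sketch below generates a date range, converts daily data to weekly, computes a moving average, and creates a lagged copy of the series. The values and dates are made up for the example.

```python
import numpy as np
import pandas as pd

# Two weeks of daily observations starting on a Monday (made-up values).
idx = pd.date_range("2023-01-02", periods=14, freq="D")
s = pd.Series(np.arange(14, dtype=float), index=idx)

weekly_mean = s.resample("W").mean()       # frequency conversion: daily -> weekly
rolling_mean = s.rolling(window=3).mean()  # moving window statistic
lag_1 = s.shift(1)                         # lagging: the previous day's value
daily_change = s - lag_1                   # same result as s.diff()

print(weekly_mean)
print(rolling_mean.head())
```

The first entries of `rolling_mean` and `lag_1` are `NaN` because there is not enough history to fill the window or the lag; this usually has to be handled before modelling.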

Time series projects with NumPy

NumPy is a Python library that adds support for huge, multi-dimensional arrays and matrices, as well as a vast number of high-level mathematical functions that may be used on these arrays. It has a very similar syntax to MATLAB and includes a high-performance multidimensional array object as well as capabilities for working with these arrays.

NumPy’s datetime64 data type and arrays enable an extremely compact representation of dates in time series. Using NumPy also makes it simple to do various time series operations using linear algebra operations.
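
A minimal sketch of both ideas: an array of `datetime64` dates, and a linear trend fitted to the series with a least-squares solve. The values are invented for the example.

```python
import numpy as np

# One week of daily timestamps stored compactly as datetime64[D].
dates = np.arange("2024-01-01", "2024-01-08", dtype="datetime64[D]")
values = np.array([3.0, 4.0, 6.0, 5.0, 7.0, 9.0, 8.0])

# Convert the dates to "days since the first observation" as floats.
days = (dates - dates[0]) / np.timedelta64(1, "D")

# Fit values ~ slope * days + intercept using linear algebra.
A = np.column_stack([days, np.ones_like(days)])
slope, intercept = np.linalg.lstsq(A, values, rcond=None)[0]

print(dates.dtype, slope, intercept)
```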

Time series projects with Datetime

Datetime is a Python module that allows us to work with dates and times. It contains the methods and functions required to handle scenarios such as:

- Representation of dates and times

- Arithmetic of dates and times

- Comparison of dates and times

Working with time series is simple using this module. It lets users turn dates and times into objects and manipulate them. For example, with only a few lines of code, we can convert from one date and time format to another, add a number of days or weeks to a date, or calculate the difference in seconds between two time objects.
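
These operations can be sketched with the standard library alone. The timestamps and format strings below are arbitrary examples.

```python
from datetime import datetime, timedelta

# Parse a string into a datetime object.
start = datetime.strptime("2024-03-01 08:30", "%Y-%m-%d %H:%M")

# Date arithmetic with timedelta (it supports days, hours, weeks, and so on,
# but has no notion of calendar months or years).
end = start + timedelta(days=10, hours=2)

# Difference between two datetimes, expressed in seconds.
elapsed_seconds = (end - start).total_seconds()

# Convert to a different text format.
reformatted = end.strftime("%d/%m/%Y %H:%M")

print(elapsed_seconds)   # 871200.0
print(reformatted)       # 11/03/2024 10:30
```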

Time series projects with Tsfresh

Tsfresh is a Python package. It automatically calculates a large number of time series characteristics, known as features. The package combines established algorithms from statistics, time series analysis, signal processing, and non-linear dynamics with a robust feature selection algorithm to provide systematic time series feature extraction.

The Tsfresh package includes a filtering procedure to prevent the extraction of irrelevant features. This procedure assesses each feature’s explanatory power and significance for the regression or classification task.

Some examples of advanced time series features are:

- Fourier transform components

- Wavelet transform

- Partial autocorrelation and others
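
To give a feel for what such features look like, here is a hand-rolled sketch that computes two of them with NumPy. This is not Tsfresh’s API or implementation, only an illustration of the kind of values it extracts automatically; the signal and the feature names are assumptions made for the example.

```python
import numpy as np

n = 200
t = np.arange(n)
signal = np.sin(2 * np.pi * 5 * t / n)   # 5 full cycles over the window

# Fourier feature: which frequency bin carries the most energy?
spectrum = np.abs(np.fft.rfft(signal))
dominant_bin = int(np.argmax(spectrum[1:]) + 1)   # skip the constant (DC) term

# Lag-1 autocorrelation (at lag 1 it coincides with the partial autocorrelation).
lag1_autocorr = float(np.corrcoef(signal[:-1], signal[1:])[0, 1])

features = {
    "dominant_fft_bin": dominant_bin,
    "lag1_autocorrelation": lag1_autocorr,
}
print(features)
```

Each series is reduced to a small set of numbers like these, which can then be fed to a regular classifier or regressor.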

Data analysis and visualization packages for time series

Data analysis and visualization packages are tools that help data analysts to create graphs and charts from their data. Data analysis is defined as the process of cleaning, transforming, and modelling data in order to uncover useful information for business decisions. The goal of data analysis is to extract useful information from data and make decisions based on that information.

The graphical representation of data is known as data visualization. Data visualization tools, which use visual elements such as charts and graphs, provide an easy way to see and understand trends and patterns in data.

There is a wide range of data analysis and visualization packages for time series and we’ll go through a few of them.

Time series projects with Matplotlib

Probably the most popular Python package for data visualization is Matplotlib. It’s used for creating static, animated, and interactive visualizations. With Matplotlib you can, for example:

- Produce plots suitable for publication

- Create interactive figures that can be zoomed in, panned, and updated

- Change the visual style and layout

It also provides a variety of options for drawing time series charts.
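
A minimal sketch of a time series line chart with dates on the x-axis. The data is a synthetic random walk, and the non-interactive `Agg` backend and in-memory buffer are choices made only so the example runs anywhere; in a notebook you would typically just display the figure.

```python
import io

import matplotlib
matplotlib.use("Agg")            # render without a display
import matplotlib.pyplot as plt
import numpy as np

# Synthetic daily series: a random walk over January and February 2024.
dates = np.arange("2024-01-01", "2024-03-01", dtype="datetime64[D]")
values = np.cumsum(np.random.default_rng(3).normal(size=dates.size))

fig, ax = plt.subplots(figsize=(8, 3))
ax.plot(dates, values, label="synthetic series")
ax.set_xlabel("Date")
ax.set_ylabel("Value")
ax.set_title("A simple time series plot")
ax.legend()
fig.autofmt_xdate()              # rotate date labels so they don't overlap

# Save the figure as PNG (here into memory; a file path works as well).
buffer = io.BytesIO()
fig.savefig(buffer, format="png")
```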

Time series projects with Plotly

Plotly is an interactive, open-source, and browser-based graphing library for Python and R. It’s a high-level, declarative charting library with over 30 chart types, including scientific charts, 3D graphs, statistical charts, SVG maps, financial charts, and more.

Besides that, with Plotly it’s possible to draw interactive time series charts such as line charts, Gantt charts, scatter plots, and similar.

Time series projects with Statsmodels

Statsmodels is a Python package that provides classes and functions for estimating a wide range of statistical models, as well as running statistical tests and statistical data analysis.

We’ll cover this library in more detail in the section about forecasting, but it’s worth mentioning here that it provides a very convenient method for time series decomposition and its visualization. With this package, we can easily decompose any time series and analyze its components, such as the trend, the seasonal component, and the residual or noise.

Experiment tracking tools for time series

Experiment tracking tools are usually high-level tools that can be used for a variety of purposes like tracking the results of an experiment, showing what would happen if one changed the parameters in an experiment, model management, and similar.

They are typically more user-friendly than low-level packages and can save a significant amount of time when developing machine learning models. Only two of them will be mentioned here, as they are most likely the most popular ones.

For time series, it’s especially important to have a convenient environment for tracking metrics and hyperparameters, since we will most likely need to run many different experiments. Time series models are usually small compared to, say, convolutional neural networks, and take a few hundred or a few thousand numerical values as input, so they train quickly. Hyperparameter tuning, on the other hand, often takes considerable time.

Finally, it is very beneficial to bring models from different packages, as well as visualization tools, together in one place.

Time series projects with neptune.ai

neptune.ai is an experiment tracker designed with a strong focus on collaboration and scalability. It lets you monitor months-long model training, track massive amounts of data, and compare thousands of metrics in the blink of an eye. The tool is known for its user-friendly interface and flexibility, enabling teams to adopt it into their existing workflows with minimal disruption. Neptune gives users a lot of freedom when defining data structures and tracking metadata.

Data scientists and ML/AI researchers can log, store, organize, display, compare, and query all their model-building metadata in a single place. Neptune handles data such as model metrics and parameters, model checkpoints, images, videos, audio files, dataset versions, and visualizations. Time series are no exception: any project that uses them can be tracked in Neptune.

Time series projects with Weights & Biases

Weights & Biases (W&B) is a machine learning platform, similar to neptune.ai, aimed at developers to help them build better models faster. It’s intended to support and optimize key MLOps life cycle steps such as model management, experiment tracking, and dataset versioning.

Like neptune.ai, this tool can be useful in time series projects, providing features for tracking and managing time series models. More about Weights & Biases is presented in their documentation.

Time series forecasting packages

Probably the most important part of a time series project is forecasting. Forecasting is the process of predicting future events based on current and past data. It rests on the assumption that the future can be inferred from the past, and that there are patterns in the data that can be used to predict what will happen next.

There are many methods for time series forecasting, from simple ones such as linear regression and ARIMA-based models up to complex multilayer neural networks and ensemble models. Here, we’ll present some packages that support different kinds of models.
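
As a sketch of the simplest end of that range, the example below turns a series into a supervised learning problem with lagged values as inputs and fits a linear regression using NumPy only. The series, the number of lags, and the variable names are assumptions made for the illustration.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

# Toy series with a perfectly linear trend: 1, 4, 7, ..., 58.
series = 3.0 * np.arange(20) + 1.0
n_lags = 3

# Each row holds n_lags past values followed by the value to predict.
windows = sliding_window_view(series, n_lags + 1)
X, y = windows[:, :-1], windows[:, -1]

# Linear regression with an intercept, solved by least squares.
A = np.column_stack([X, np.ones(len(X))])
coef, *_ = np.linalg.lstsq(A, y, rcond=None)

# One-step-ahead forecast from the last n_lags observations.
next_input = np.append(series[-n_lags:], 1.0)
prediction = float(next_input @ coef)

print(prediction)   # approximately 61.0, the next value of the sequence
```

The same lag-matrix framing lets any regression model, from linear models to gradient boosting or neural networks, be used for forecasting.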

Time series forecasting with Statsmodels

Statsmodels is a package that we’ve already mentioned in the section about data analysis and visualization. However, it is even more relevant for forecasting. In short, this package provides a range of statistical models and hypothesis tests.

Statsmodels package also includes model classes and functions for time series analysis. Autoregressive moving average models (ARMA) and vector autoregressive models (VAR) are examples of basic models. Markov switching dynamic regression and autoregression are examples of non-linear models. It also includes time series descriptive statistics such as autocorrelation, partial autocorrelation function, and periodogram, as well as the theoretical properties of ARMA or related processes.

Time series forecasting with Pmdarima

Pmdarima is a statistical library that facilitates the modelling of time series using ARIMA-based methods. Aside from that, it has other features such as:

- A set of statistical tests for stationarity and seasonality

- Various endogenous and exogenous transformers including Box-Cox and Fourier transformations

- Decompositions of seasonal time series, cross-validation utilities, and other tools

Perhaps the most useful utility of this library is its auto-ARIMA functionality, which searches over candidate ARIMA models within the constraints provided and returns the best one according to an information criterion such as AIC or BIC.

Time series forecasting with Sklearn

Sklearn, or scikit-learn, is certainly one of the most commonly used machine learning packages in Python. It provides various classification, regression, and clustering methods, including random forest, support vector machine, k-means, and others. Besides that, it provides utilities for dimensionality reduction, model selection, data preprocessing, and much more.

In addition to various models, it offers functionality that is useful for time series, such as pipelines, time series cross-validation functions, and a range of metrics for measuring results.
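
Time series cross-validation differs from ordinary k-fold because the training data must always come before the test data. A minimal sketch with `TimeSeriesSplit`, using a made-up array of 12 ordered samples:

```python
import numpy as np
from sklearn.model_selection import TimeSeriesSplit

# 12 observations in time order (the values themselves don't matter here).
X = np.arange(12).reshape(-1, 1)

tscv = TimeSeriesSplit(n_splits=3)
splits = [(train.tolist(), test.tolist()) for train, test in tscv.split(X)]

for train, test in splits:
    print("train:", train, "test:", test)
# train: [0, 1, 2] test: [3, 4, 5]
# train: [0, 1, 2, 3, 4, 5] test: [6, 7, 8]
# train: [0, 1, 2, 3, 4, 5, 6, 7, 8] test: [9, 10, 11]
```

Each fold trains on an expanding window of past data and evaluates on the block that follows it, so no future information leaks into training.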

Time series forecasting with PyTorch

PyTorch is a Python-based deep learning library for fast and flexible experimentation. It was originally developed by researchers and engineers working on Facebook’s AI research team and then open-sourced. Deep learning software such as Tesla Autopilot, Uber’s Pyro, and Hugging Face’s Transformers are built on top of PyTorch.

With PyTorch, it’s possible to build powerful recurrent neural network models such as LSTM and GRU and use them to forecast time series. There is also a PyTorch Forecasting package with state-of-the-art network architectures. It includes a time series dataset class that abstracts handling variable transformations, missing values, randomized subsampling, multiple history lengths, and other similar issues.

Time series forecasting with TensorFlow (Keras)

TensorFlow is an open-source software library for machine learning, based on data flow graphs. It was originally developed by the Google Brain team for internal use, but later it was released as an open-source project. The software library provides a set of high-level data flow operators that can be combined to express complex computations involving multidimensional data arrays, matrices, and higher-order tensors in a natural way. It also provides some lower-level primitives such as kernels that are used to construct custom operators or to speed up the execution of common operations.

Keras is a high-level API that is built on top of TensorFlow. Using Keras and TensorFlow, it is possible to build neural network models for time series forecasting. The TensorFlow documentation includes a tutorial on a time series project that uses a weather data set.

Time series forecasting with Sktime

Sktime is an open-source Python library for time series and machine learning. It includes the algorithms and transformation tools needed to solve time series regression, forecasting, and classification tasks efficiently. Sktime was created to work with scikit-learn and make it easy to adapt algorithms for interrelated time series tasks as well as build composite models.

Overall, this package provides:

- State-of-the-art algorithms for time series forecasting

- Transformations for time series such as detrending or deseasonalization and similar

- Pipelines for models and transformations, model tuning utilities, and other useful functionalities

Time series forecasting with Prophet

Prophet is an open-source library released by Facebook’s Core Data Science team. Briefly, it consists of a procedure for forecasting time series data, based on an additive model that combines a few non-linear trends with yearly, weekly and daily seasonality, as well as holiday effects. It works best with time series that have strong seasonal effects and historical data from multiple seasons. It’s capable of handling missing data, trend shifts and outliers in general.

Time series forecasting with PyCaret

PyCaret is an open-source machine learning library in Python that automates machine learning workflows. With PyCaret it’s possible to build and test several machine learning models with minimal effort and a few lines of code.

Basically, with minimal code, not going deep into the details, it’s possible to build an end-to-end machine learning project from EDA to deployment.

This library has some useful time series models among which are:

- Seasonal Naive Forecaster

- ARIMA

- Polynomial Trend Forecaster

- Lasso Net with deseasonalize and detrending options and many others

Time series forecasting with AutoTS

AutoTS is a time series package for Python, designed to automate time series forecasting. It can be used to find the best forecasting model for both univariate and multivariate time series. AutoTS can also clean NaN values and outliers from the data.

Nearly 20 predefined models like ARIMA, ETS, VECM are available, and using genetic algorithms, it finds the best models, preprocessing, and ensembling for a given dataset.

Time series forecasting with Darts

Darts is a Python library that allows simple manipulation and forecasting of time series. It includes a wide range of models, from classics like exponential smoothing (ES) and ARIMA up to RNNs and transformers. All of the models can be used in the same way, with fit() and predict() functions, similar to the scikit-learn package.

The library also allows easy backtesting of models, combining predictions from multiple models, and incorporating external data. It supports both univariate and multivariate models.

Time series forecasting with Kats

Kats is a package released by Facebook’s Infrastructure Data Science team, intended to perform time series analysis. The goal of this package is to provide everything needed for time series analysis, including detection, forecasting, feature extraction/embedding, multivariate analysis, and so on.

Kats provides a comprehensive set of forecasting tools, such as ensembling, meta-learning models, backtesting, hyperparameter tuning, and empirical prediction intervals. Also, it includes features for detecting seasonalities, outliers, change points, and slow trend changes in time series data. With the TSFeature option, it’s possible to generate 65 features with clear statistical definitions that can be used in most machine learning models.

Forecasting libraries comparison

To make the forecasting packages easier to compare at a high level, here is a table with some common characteristics: year of release, GitHub stars, and the kinds of models each package supports.

| Library | Year of release | GitHub stars | Statistics & econometrics | Machine learning | Deep learning |
| --- | --- | --- | --- | --- | --- |
| Statsmodels | 2010 | 7200 | ++ | + | |
| Pmdarima | 2018 | 1100 | + | + | |
| Sklearn | 2007 | 50000 | + | ++ | + |
| PyTorch | 2016 | 55000 | | ++ | + |
| TensorFlow | 2015 | 164000 | | + | ++ |
| Sktime | 2019 | 5000 | + | + | |
| Prophet | 2017 | 14000 | + | + | |
| PyCaret | 2020 | 5500 | + | + | + |
| AutoTS | 2020 | 450 | + | + | |
| Darts | 2021 | 3800 | + | + | + |
| Kats | 2021 | 3600 | + | + | |

Conclusion

In this post, we described the most commonly used tools, packages, and libraries for time series projects. With this list of tools, it’s possible to cover almost any project related to time series. On top of that, we provided a comparison of forecasting libraries that shows some interesting stats, such as year of release, popularity, and the kinds of models each one supports.

If you want to dive deeper into the area of time series, there is a collection of different packages that can be used to process time series: “GitHub: using Python to work with time series data”.

For those who would like to learn more about time series in general with a theoretical approach, a great choice would be the book “New Introduction to Multiple Time Series Analysis” by Professor Dr. Helmut Lütkepohl.